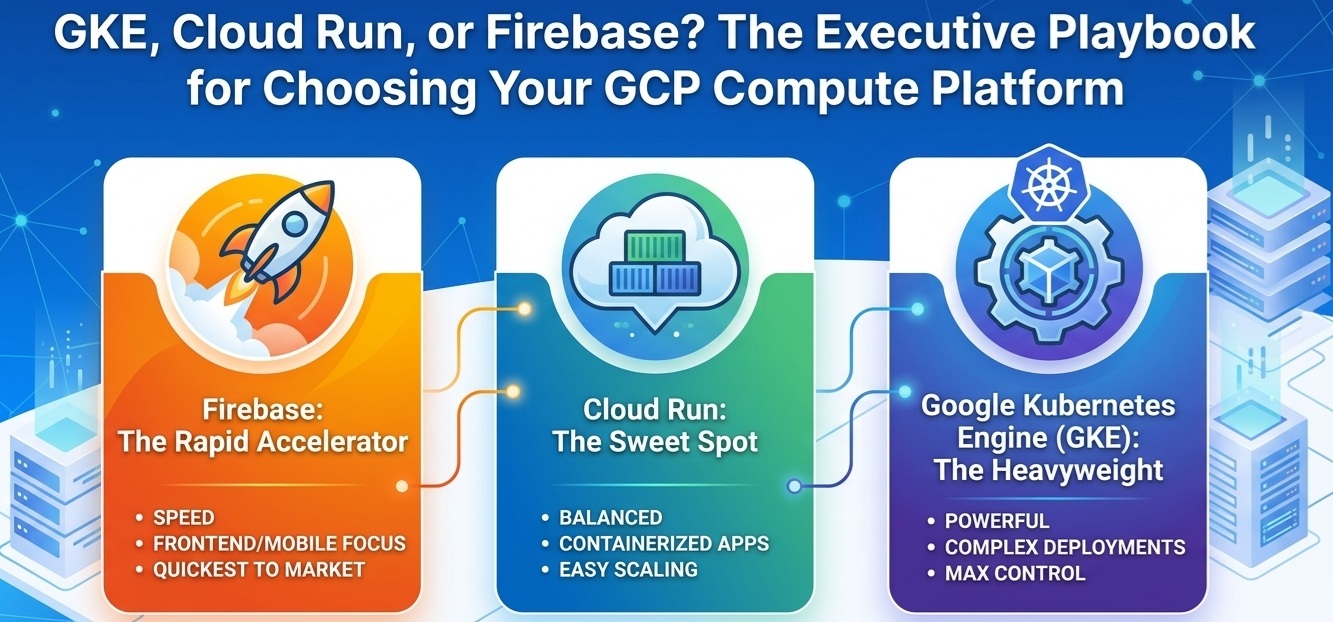

GKE, Cloud Run, or Firebase? The Executive Playbook for Choosing Your GCP Compute Platform

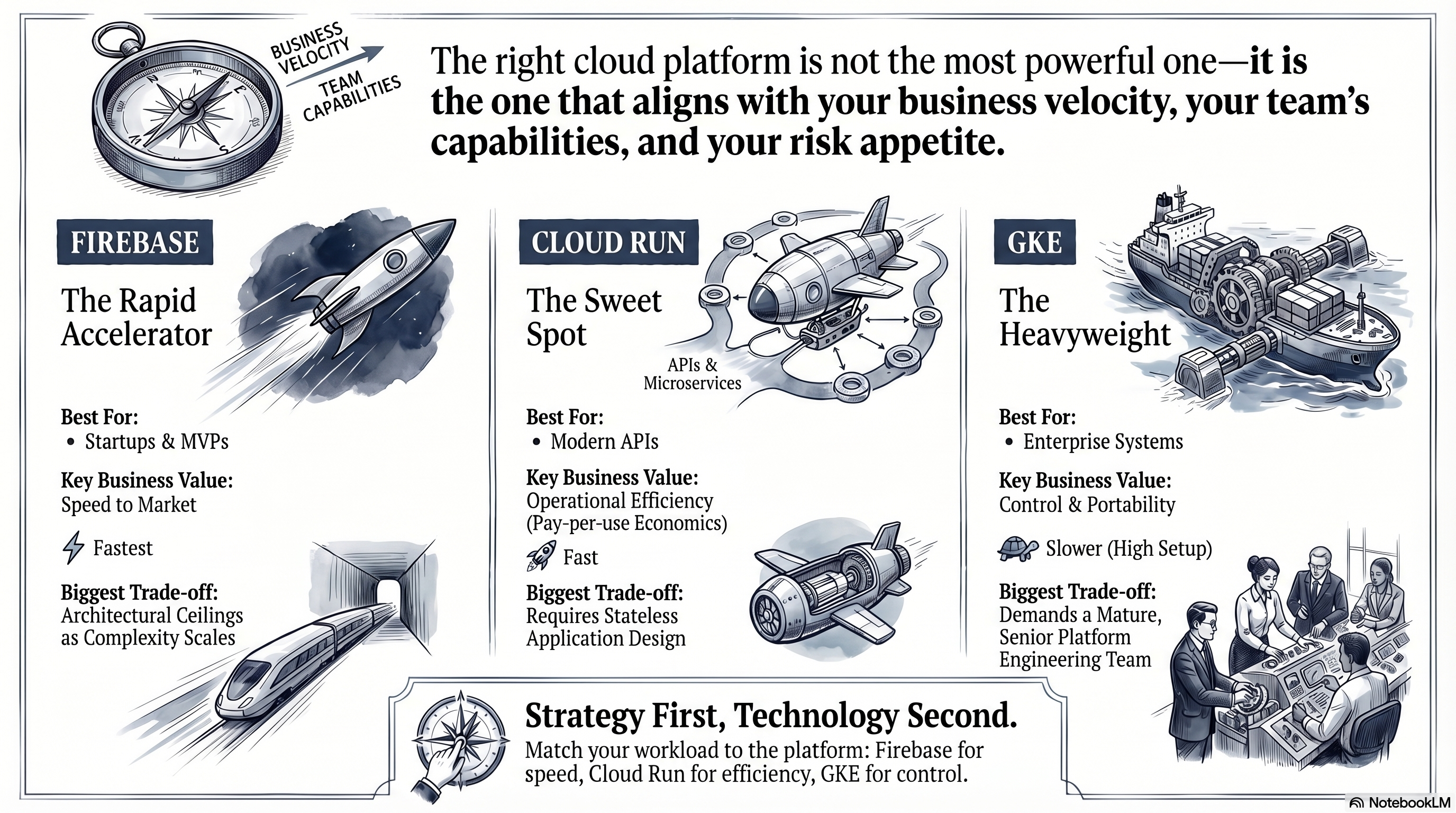

The right cloud platform is not the most powerful one — it is the one that aligns with your business velocity, your team’s capabilities, and your risk appetite.

The Cloud-Native Promise: Speed Without Chaos

Every technology leader building on Google Cloud Platform (GCP) eventually faces the same dilemma: which compute platform do we actually commit to? The stakes are real. The wrong choice can drain engineering budgets, delay market launches, and create architectural debt that takes years to unwind.

The term cloud-native has become a boardroom staple, but its business promise is specific: ship faster, scale on demand, and pay only for what you consume. GCP delivers on this promise through three flagship platforms — Firebase, Cloud Run, and Google Kubernetes Engine (GKE) — each designed for a fundamentally different business context.

This article cuts through the technical complexity. It avoids the jargon and acronyms that fill engineering standups but rarely get explained in the boardroom, and offers a clear, opinionated framework to help executive leaders match the right platform to their actual business needs.

Firebase: The Rapid Accelerator

Best for: Startups, MVPs, Mobile & Web Applications

If your primary business objective is getting to market before the competition, Firebase is the most powerful accelerator in Google’s arsenal. It is, at its core, a fully managed application development platform — meaning Google handles virtually every layer of infrastructure so your engineering team can focus exclusively on the product experience.

Firebase removes the need to separately provision databases, authentication systems, file storage, and hosting. These capabilities come bundled, pre-integrated, and ready to use from day one. For a founding team racing to validate a product hypothesis, or an established enterprise launching a new digital product line, this translates directly into weeks or months saved before the first user even signs in.

Key business advantages:

- Extreme Time-to-Market: Engineering teams can ship a fully functional, production-grade mobile or web application in days rather than months.

- Minimal Operational Overhead: No infrastructure team required at launch. Google manages availability, security patching, and global distribution automatically.

- Predictable Entry Cost: The free tier is genuinely generous, making it a near-zero-risk platform for new product bets.

The trade-off executives must acknowledge:

Firebase operates within an opinionated, proprietary ecosystem. As product complexity scales, particularly when business logic becomes intricate, data volumes grow, or regulatory requirements intensify, teams can hit architectural ceilings that are expensive and time-consuming to break through. Migrating away from Firebase’s data model at scale is a non-trivial engineering effort. Costs can also escalate unexpectedly: services such as Cloud Firestore bill per operation (document reads, writes, and deletes), so a feature that touches more data than planned shows up directly on the invoice.

The leadership question: Is the goal to prove a concept and acquire users, or to build a long-term, deeply customized system of record? Firebase excels decisively at the former.

Cloud Run: The Sweet Spot

Best for: Modern APIs, Microservices, Internal Tools, and Event-Driven Workloads

Cloud Run is GCP’s answer to a question most engineering organizations eventually ask: “Can we get the operational simplicity of a managed platform without locking our architecture into a proprietary ecosystem?” For most request-driven workloads the answer is yes, and that combination is Cloud Run’s core appeal.

The business model is straightforward: you package your application as a container (a self-contained, portable unit of software), and Google Cloud handles the rest, including scaling, load balancing, security, and availability. Critically, Cloud Run scales to zero. By default, when no requests are arriving, no instances run and no compute charges accrue. When demand surges (a viral marketing campaign, a batch processing spike, a seasonal peak), it adds instances within seconds without any manual intervention.
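To make the container model concrete, here is a minimal sketch of the kind of service that gets packaged into a container, using only the Python standard library. The one platform-specific detail is that Cloud Run passes the listening port through the `PORT` environment variable (8080 if unset). The function names `build_response` and `serve` and the `/healthz` path are illustrative choices, not anything the platform requires.

```python
import json
import os
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Dict, Tuple


def build_response(path: str) -> Tuple[int, Dict[str, str]]:
    """Derive the reply from the request alone; nothing is remembered between calls."""
    if path == "/healthz":  # illustrative health-check path (an assumption)
        return 200, {"status": "ok"}
    return 200, {"message": "hello", "path": path}


class Handler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        status, payload = build_response(self.path)
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve() -> None:
    """Start the server on the port the platform provides."""
    port = int(os.environ.get("PORT", "8080"))
    HTTPServer(("0.0.0.0", port), Handler).serve_forever()
```

Calling `serve()` as the container's entry point is all the application has to do; how many copies run at any moment is the platform's decision, which is what makes scaling to zero and back possible.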

Key business advantages:

- Pay-Per-Use Economics: Under the default request-based billing model, you are billed only while your application is processing requests, with usage rounded up to the nearest 100 milliseconds. The cost of idle infrastructure largely disappears.

- Zero Infrastructure Management: No servers, no virtual machines, no patching cycles. Your engineering team invests its time in product capabilities, not operations.

- Architectural Freedom: Cloud Run runs standard, portable containers. Switching cloud providers or adapting the architecture in the future is far less disruptive than with proprietary platforms.

- Rapid Deployment Velocity: Teams can go from code commit to production deployment in minutes, enabling aggressive iteration cycles.
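The pay-per-use arithmetic can be sketched in a few lines. This is a rough model under stated assumptions: billable time is rounded up to 100 ms increments, requests do not overlap on an instance (concurrency of one), and per-request fees and the free tier are ignored. The two rate constants are placeholders for illustration, not Google's published prices.

```python
import math

# Placeholder rates for illustration only; NOT Google's published prices.
HYPOTHETICAL_RATE_PER_VCPU_SECOND = 0.00002
HYPOTHETICAL_RATE_PER_GIB_SECOND = 0.000002
BILLING_INCREMENT_MS = 100


def billable_seconds(request_ms: float) -> float:
    """Round a request's duration up to the next 100 ms increment, in seconds."""
    increments = math.ceil(request_ms / BILLING_INCREMENT_MS)
    return increments * BILLING_INCREMENT_MS / 1000


def monthly_compute_cost(
    requests: int, avg_request_ms: float, vcpus: float, memory_gib: float
) -> float:
    """Estimate compute cost, assuming one request per instance at a time."""
    seconds = requests * billable_seconds(avg_request_ms)
    per_second = (
        vcpus * HYPOTHETICAL_RATE_PER_VCPU_SECOND
        + memory_gib * HYPOTHETICAL_RATE_PER_GIB_SECOND
    )
    return seconds * per_second
```

A request that takes 230 ms is billed as 0.3 seconds, and a month with zero requests costs zero. Substituting current rates from the pricing page turns this into a usable first-pass budget estimate; services that handle many requests concurrently per instance would come out cheaper than this model suggests.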

The trade-off executives must acknowledge:

Cloud Run is purpose-built for stateless workloads — applications that do not maintain session or memory state between individual requests. Systems that require persistent in-memory processing, long-running background operations, or highly stateful workflows require architectural adaptation before they can benefit from Cloud Run. This is typically a solvable engineering problem, but it is a real investment that must be factored into modernization plans.
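The architectural adaptation usually means moving state out of the application's memory and into an external service. The sketch below illustrates the pattern: `SessionStore` is a hypothetical interface standing in for whatever managed cache or database a team chooses, and `InMemoryStore` exists only so the example runs on its own.

```python
from typing import Dict, Protocol


class SessionStore(Protocol):
    """Hypothetical interface for an external store (managed cache, database, etc.)."""

    def get(self, key: str) -> int: ...

    def set(self, key: str, value: int) -> None: ...


class InMemoryStore:
    """Stand-in for this example only; a real deployment would call an external service."""

    def __init__(self) -> None:
        self._data: Dict[str, int] = {}

    def get(self, key: str) -> int:
        return self._data.get(key, 0)

    def set(self, key: str, value: int) -> None:
        self._data[key] = value


def handle_add_to_cart(store: SessionStore, user_id: str, quantity: int) -> int:
    """Stateless handler: everything it needs to remember lives in the store."""
    total = store.get(user_id) + quantity
    store.set(user_id, total)
    return total
```

Because the handler keeps nothing in its own memory, any instance can serve any request, and instances can be created or destroyed freely. A production version would also want the store to perform the increment atomically, since two instances may update the same key at once.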

The leadership question: Are your teams building new services, APIs, or modernizing existing applications that can operate in a request-response model? Cloud Run delivers the strongest return on operational investment in this space.

Google Kubernetes Engine (GKE): The Heavyweight

Best for: Enterprise-Scale Systems, Legacy Modernization, Multi-Cloud Architectures

GKE is Google’s managed platform for Kubernetes, the open-source container orchestration system that powers some of the world’s largest digital businesses, including those of Spotify, Airbnb, and The New York Times (Google Cloud, 2024d). Understanding GKE does not require understanding Kubernetes at a technical level. What matters at the executive level is this: GKE gives your organization maximum control, maximum portability, and maximum scale, at the cost of maximum complexity.

Unlike Firebase or Cloud Run, GKE gives your engineering teams precise control over every dimension of the infrastructure — compute resources, networking topology, security boundaries, deployment strategies, and hardware selection. For organizations running complex, multi-service architectures at enterprise scale, this control is not a luxury; it is a necessity.

Key business advantages:

- Low Vendor Lock-In: Kubernetes is open source and available as a managed service on every major cloud. Applications built on GKE can be migrated to AWS, Azure, or on-premises data centers with significantly less friction than applications built on proprietary platforms.

- Unlimited Customization: Regulatory requirements, specialized hardware needs (GPUs for AI workloads), multi-region data residency constraints, and complex networking topologies are all addressable within GKE.

- Enterprise Ecosystem Integration: GKE integrates natively with the full spectrum of enterprise tooling — observability platforms, CI/CD pipelines, security scanners, and compliance frameworks.

- Workload Consolidation: Organizations can run hundreds of distinct services on a shared, efficiently utilized platform, improving hardware utilization and reducing per-service overhead.

The trade-off executives must acknowledge:

GKE demands a dedicated, senior Platform Engineering or DevOps capability. The operational complexity of Kubernetes is well-documented. Organizations that underinvest in this team — or attempt to adopt GKE without it — routinely experience cost overruns, reliability incidents, and delayed delivery cycles. According to the Cloud Native Computing Foundation (2023), Kubernetes adoption challenges consistently center on organizational skills gaps, not the technology itself.

Selecting GKE without the human capital to operate it is one of the most common and costly mistakes in enterprise cloud strategy.

The leadership question: Does your organization have — or have a credible plan to build — a mature Platform Engineering team? If yes, GKE is an extraordinary long-term investment. If no, starting with Cloud Run and evolving toward GKE as the team matures is a far safer path.

Executive Summary Matrix

| Dimension | Firebase | Cloud Run | GKE |
|---|---|---|---|
| Best For | Startups, MVPs, mobile/web apps | APIs, microservices, event-driven workloads | Enterprise systems, legacy modernization, multi-cloud |
| Time-to-Market | ⚡ Fastest | 🚀 Fast | 🐢 Slower (setup investment) |
| Total Cost of Ownership | Low initially; can escalate at scale | Lowest operational cost; pay-per-use | High; requires dedicated engineering team |
| Scalability | High, within platform constraints | High, automatically managed | Unlimited; fully configurable |
| Vendor Lock-In Risk | High (proprietary ecosystem) | Low-Medium (portable containers) | Very Low (open standard) |
| Operational Complexity | Very Low | Low | Very High |
| Key Business Value | Speed to first user | Efficiency and developer velocity | Control, compliance, and portability |
| Biggest Trade-Off | Architectural ceiling at scale | Requires stateless application design | Demands a mature Platform Engineering team |

Conclusion: Strategy First, Technology Second

The most consequential mistake technology leaders make when choosing a cloud compute platform is leading with technology. The right question is never “Which platform is the most advanced?” — it is always “Which platform best serves our current business stage, team capabilities, and strategic roadmap?”

An early-stage startup validating product-market fit should not be building on GKE. A regulated financial institution with complex data sovereignty requirements and 200 microservices should not be anchored to Firebase. And a mid-market SaaS company looking to accelerate delivery without hiring a platform engineering team has a strong argument for Cloud Run as its primary compute layer.

In most enterprise environments, the answer is not a single platform but a deliberate combination: Firebase for consumer-facing speed, Cloud Run for internal APIs and event-driven workflows, and GKE for the core platform that demands full control. The sophistication lies in knowing which workload belongs where — and being disciplined enough to enforce that boundary over time.
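One way to enforce that boundary is to write the placement rule down. The sketch below is a deliberately simplified encoding of this article's three leadership questions, not an official decision tool; the `Workload` fields and the function names are assumptions made for illustration, and a real assessment would weigh more factors.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Workload:
    name: str
    goal_is_fast_validation: bool   # prove a concept and acquire first users
    needs_deep_infra_control: bool  # custom networking, GPUs, data residency
    has_platform_team: bool         # mature Platform Engineering capability


def recommend(workload: Workload) -> str:
    """Apply the article's heuristics in priority order."""
    # GKE only pays off when the need for control is matched by the team to run it.
    if workload.needs_deep_infra_control and workload.has_platform_team:
        return "GKE"
    # Speed to first user is Firebase's home ground.
    if workload.goal_is_fast_validation:
        return "Firebase"
    # Otherwise default to the low-operations middle path.
    return "Cloud Run"


def plan(workloads: List[Workload]) -> Dict[str, str]:
    """Map each workload in a portfolio to a recommended platform."""
    return {w.name: recommend(w) for w in workloads}
```

Run against a portfolio of a consumer MVP, an internal API, and a regulated core platform with a platform team behind it, `plan` returns Firebase, Cloud Run, and GKE respectively. A workload that needs deep control but has no platform team lands on Cloud Run, mirroring the advice to start there and evolve toward GKE as the team matures.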

The cloud-native advantage is not just technical. It is organizational. The platforms are ready. The question is whether your engineering strategy, team structure, and investment roadmap are aligned to capture the value they offer.

💬 Which platform is your organization betting on — or are you running a hybrid of all three? I’d be interested in hearing how other engineering leaders are navigating this decision. Connect with me on LinkedIn and let’s exchange notes.

This article is part of the dantas.io tech blog — a space for senior engineering and cloud architecture content aimed at practitioners and technology leaders.

References

Cloud Native Computing Foundation. (2023). CNCF annual survey 2023. https://www.cncf.io/reports/cncf-annual-survey-2023/

Gartner. (2024). Magic quadrant for cloud database management systems. Gartner Research. https://www.gartner.com/en/documents/cloud-database-management

Google Cloud. (2024a). Cloud Run documentation: Overview. Google LLC. https://cloud.google.com/run/docs/overview/what-is-cloud-run

Google Cloud. (2024b). Firebase documentation: Choose a database. Google LLC. https://firebase.google.com/docs/database/rtdb-vs-firestore

Google Cloud. (2024c). Google Kubernetes Engine documentation: GKE overview. Google LLC. https://cloud.google.com/kubernetes-engine/docs/concepts/kubernetes-engine-overview

Google Cloud. (2024d). Google Cloud customer case studies. Google LLC. https://cloud.google.com/customers

Kubernetes. (2024). Production-grade container orchestration. Cloud Native Computing Foundation. https://kubernetes.io/

Ligus, S. (2022). Real-time analytics: Techniques to analyze and visualize streaming data (1st ed.). O’Reilly Media.

McKinsey & Company. (2023). Rewired: The McKinsey guide to outcompeting in the age of digital and AI. McKinsey Digital. https://www.mckinsey.com/capabilities/mckinsey-digital/our-insights

Wiggins, A. (2012). The twelve-factor app. Heroku. https://12factor.net/